
TensorFlow swish activation

12/17/2023

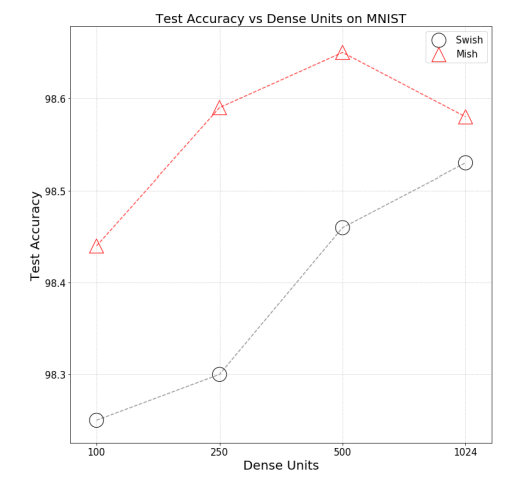

The swish() activation function is named for its shape. In science fiction movies, a colored hair swish is usually associated with a character who is ambiguous in some way. From left to right: Two fabricants (clones) from “Cloud Atlas” (2012). Yukio (played by actress Shiori Kutsuna), a female ninja, from “Deadpool 2” (2018). Michelle (played by actress Bai Ling), an assassin with a heart of gold, from “The Gene Generation” (2007). Psylocke (played by actress Mei Melancon), a mutant who possesses psionic powers, from “X-Men: The Last Stand” (2006).

Implementing Swish Activation Function in Keras

Keras is a favorite tool among many in machine learning. TensorFlow is even replacing its high-level API with Keras come TensorFlow version 2. Keras is called a “front-end” API for machine learning. Using Keras you can swap out the “backend” between many frameworks, officially including TensorFlow, Theano, and CNTK. One of my favorite libraries, PlaidML, has also built its own support for Keras.

This kind of backend-agnostic framework is great for developers. If using Keras directly, you can use the PlaidML backend on macOS with GPU support while developing and creating your ML model. Then when you are ready for production you can swap out the backend for TensorFlow and have it serving predictions on a Linux server. All without changing any code, just a configuration file.

At some point in your journey you will get to a point where Keras starts limiting what you are able to do. It is at this point that TensorFlow’s website will point you to their “expert” articles and start teaching you how to use TensorFlow’s low-level APIs to build neural networks without the limitations of Keras.

Before jumping into this lower level you might consider extending Keras before moving past it. This can be a great option to save reusable code written in Keras and to prototype changes to your network in a high-level framework that allows you to move quickly.
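As a rough illustration of extending Keras rather than dropping down to the low-level API, here is a minimal sketch of a custom swish activation passed to ordinary Dense layers. It assumes the TensorFlow backend and the tf.keras API; the helper name `swish` and the 6-10-10-3 layer sizes are my own choices for illustration, not something prescribed by Keras.

```python
import tensorflow as tf


def swish(x):
    # swish(x) = x * sigmoid(x); written by hand here as an example of a
    # custom activation (hypothetical helper, not the library's built-in).
    return x * tf.sigmoid(x)


# Any Python callable that maps a tensor to a tensor can be used as an
# activation. The layer sizes below are assumptions for this sketch.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(6,)),
    tf.keras.layers.Dense(10, activation=swish),
    tf.keras.layers.Dense(10, activation=swish),
    tf.keras.layers.Dense(3),  # raw logits, no softmax
])

if __name__ == "__main__":
    model.summary()
```

Because the activation is just a function, swapping it for tanh or relu is a one-word change, which is the kind of quick prototyping the high-level framework is good for.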
At the time I’m writing this blog post, Keras and TensorFlow have a built-in swish() function (released about 10 weeks ago), but the PyTorch library does not have a swish() function. However, it’s trivial to implement inside a PyTorch neural network class, for example:

    # z = T.tanh(self.hid1(x))  # replace tanh() w/ swish()
    z = self.oupt(z)  # no softmax for multi-class

Update: I just discovered that PyTorch 1.7 does have a built-in swish() function.

The fact that PyTorch doesn’t have a built-in swish() function is interesting. Adding such a trivial function just bloats a large library even further. But if swish() had been in PyTorch I would have discovered it earlier. So, adding what are essentially unnecessary functions to PyTorch can have a minor upside.

The demo run on the left uses tanh() activation with a LR = 0.01. The demo run on the right uses swish() activation with a LR = 0.02.

I took an existing 6-(10-10)-3 classifier I had, which used tanh() on the two hidden layers, and replaced tanh() with swish(). Compared to the NN with tanh() and a learning rate of 0.01, the swish() version learned a bit slower. But when I used a learning rate of 0.02 with swish(), I got essentially the same results. So, swish() worked fine, and I believe the research claims that swish() is superior to relu() and tanh() for very deep NNs.

The field of machine learning is very exciting. There are significant new developments, such as the use of the swish() activation function, being discovered all the time.
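To make the PyTorch fragment above concrete, here is a minimal sketch of how a 6-(10-10)-3 classifier with a hand-written swish() might look. Only the layer names `hid1` and `oupt` and the `T` alias come from the fragment; the class name `Net`, the `hid2` layer, and the `swish` method are assumptions filled in for illustration.

```python
import torch as T


class Net(T.nn.Module):
    """Sketch of a 6-(10-10)-3 classifier; names partly assumed."""

    def __init__(self):
        super().__init__()
        self.hid1 = T.nn.Linear(6, 10)
        self.hid2 = T.nn.Linear(10, 10)  # assumed second hidden layer
        self.oupt = T.nn.Linear(10, 3)

    @staticmethod
    def swish(x):
        # swish(x) = x * sigmoid(x)
        return x * T.sigmoid(x)

    def forward(self, x):
        # z = T.tanh(self.hid1(x))  # replace tanh() w/ swish()
        z = self.swish(self.hid1(x))
        z = self.swish(self.hid2(z))
        z = self.oupt(z)  # no softmax for multi-class
        return z


if __name__ == "__main__":
    net = Net()
    batch = T.randn(4, 6)    # four dummy inputs with six features each
    print(net(batch).shape)  # one row of three logits per input
```

The output layer returns raw logits because a loss such as `T.nn.CrossEntropyLoss` applies the softmax step itself.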

It’s very difficult, but fun, to keep up with all the new ideas in machine learning. I was recently alerted to the new swish() activation function for neural networks. My thanks to fellow ML enthusiast Thorsten Kleppe for pointing swish() out to me when he mentioned the similarity between swish() and gelu() in a Comment to an earlier post. I don’t know Thorsten personally, but he seems like a very bright and creative guy.

In the early days of NNs, logistic sigmoid() was the most common activation function. Then relu() was found to work better for deep neural networks. Many variations of relu() followed but none were consistently better, so relu() has been used as a de facto default since about 2015. The swish() function was devised in 2017.

I made this graph of sigmoid(), swish(), and relu() using Excel. The swish() function is sort of a cross between logistic sigmoid() and relu(). The three related activation functions are: sigmoid(x) = 1 / (1 + e^-x), swish(x) = x * sigmoid(x), and relu(x) = max(0, x).

The Wikipedia entry on swish() points out that swish() is sometimes called sil() or silu(), which stands for sigmoid-weighted linear unit.
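For readers who want to reproduce a comparison like the Excel graph, here is a small standard-library sketch that evaluates the three functions at a few sample points. The function names and the sample x values are my own choices for illustration.

```python
import math


def sigmoid(x):
    # Logistic sigmoid: squashes any real input into (0, 1).
    return 1.0 / (1.0 + math.exp(-x))


def swish(x):
    # swish(x) = x * sigmoid(x); close to relu for large positive x,
    # but smooth and slightly negative for small negative x.
    return x * sigmoid(x)


def relu(x):
    # Rectified linear unit: zero for negative inputs, identity otherwise.
    return max(0.0, x)


if __name__ == "__main__":
    print("    x  sigmoid    swish     relu")
    for x in (-4.0, -2.0, -1.0, 0.0, 1.0, 2.0, 4.0):
        print(f"{x:5.1f}  {sigmoid(x):7.4f}  {swish(x):7.4f}  {relu(x):7.4f}")
```

Printing the values side by side shows the cross between the two: swish() dips a little below zero for negative inputs, where relu() is flat, and tracks relu() as x grows.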
